This is an excerpt from Remote Warfare: Interdisciplinary Perspectives, available as a free download from E-International Relations.

The use of force exercised by the militarily most advanced states in the last twenty years has been dominated by ‘remote warfare’, which, at its simplest, is a ‘strategy of countering threats at a distance, without the deployment of large military forces’ (Oxford Research Group cited in Biegon and Watts 2019, 1). Although remote warfare comprises very different practices, academic research and the wider public pay much attention to drone warfare as a very visible form of this ‘new’ interventionism. In this regard, research has produced important insights into the various effects of drone warfare in ethical, legal, political, but also social and economic contexts (Cavallaro, Sonnenberg and Knuckey 2012; Sauer and Schörnig 2012; Casey-Maslen 2012; Gregory 2015; Hall and Coyne 2013; Schwarz 2016; Warren and Bode 2015; Gusterson 2016; Restrepo 2019; Walsh and Schulzke 2018). But current technological developments suggest an increasing, game-changing role of artificial intelligence (AI) in weapons systems, represented by the debate on emerging autonomous weapons systems (AWS). This development poses a new set of important questions for international relations, which pertain to the impact that increasingly autonomous features in weapons systems can have on human decision-making in warfare, leading to highly problematic ethical and legal consequences.

In contrast to remote-controlled platforms such as drones, this development refers to weapons systems that are AI-driven in their critical functions. That is, weapons that process data from on-board sensors and algorithms to ‘select (i.e., search for or detect, identify, track, select) and attack (i.e., use force against, neutralise, damage or destroy) targets without human intervention’ (ICRC 2016). AI-driven features in weapons systems can take many different forms but clearly depart from what might be conventionally understood as ‘killer robots’ (Sparrow 2007). We argue that including AI in weapons systems is important not because we seek to highlight the looming emergence of fully autonomous machines making life and death decisions without any human intervention, but because human control is increasingly becoming compromised in human-machine interactions.

AI-driven autonomy has already become a new reality of warfare. We find it, for example, in aerial combat vehicles such as the British Taranis, in stationary sentries such as the South Korean SGR-A1, in aerial loitering munitions such as the Israeli Harop/Harpy, and in ground vehicles such as the Russian Uran-9 (see Boulanin and Verbruggen 2017). These diverse systems are captured by the (somewhat problematic) catch-all category of autonomous weapons, a term we use as a springboard to draw attention to present forms of human-machine relations and the role of AI in weapons systems short of full autonomy.

The increasing sophistication of weapons systems arguably exacerbates trends of technologically mediated forms of remote warfare that have been around for some decades. The decisive question is how new technological innovations in warfare affect human-machine interactions and increasingly compromise human control. The aim of our contribution is to investigate the significance of AWS in the context of remote warfare by discussing, first, their specific characteristics, particularly with regard to the essential aspect of distance and, second, their implications for ‘meaningful human control’ (MHC), a concept that has gained increasing importance in the political debate on AWS. We will consider MHC in more detail further below.

We argue that AWS increase fundamental asymmetries in warfare and that they represent an extreme version of remote warfare in realising the potential absence of immediate human decision-making on lethal force. Furthermore, we examine the issue of MHC that has emerged as a core concern for states and other actors seeking to regulate AI-driven weapons systems. Here, we also contextualise the current debate with state practices of remote warfare relating to systems that have already set precedents in terms of ceding meaningful human control. We will argue that these incremental practices are likely to change use of force norms, which we loosely define as standards of appropriate action (see Bode and Huelss 2018). Our argument is therefore less about highlighting the novelty of autonomy, and more about how practices of warfare that compromise human control become accepted.

Autonomous Weapons Systems and Asymmetries in Warfare

AWS increase fundamental asymmetries in warfare by creating physical, emotional and cognitive distancing. First, AWS increase asymmetry by creating physical distance in completely shielding their commanders/operators from physical threats or from being on the receiving end of any defensive attempts. We do not argue that the physical distancing of combatants has started with AI-driven weapons systems. This desire has historically been a common feature of warfare, and every military force has an obligation to protect its forces from harm as much as possible, which some also present as an argument for remotely-controlled weapons (see Strawser 2010). Creating an asymmetrical situation where the enemy combatant is at risk of injury while your own forces remain safe is, after all, a basic desire and objective of warfare.

But the technological asymmetry associated with AI-driven weapon systems completely disturbs the ‘moral symmetry of mortal hazard’ (Fleischman 2015, 300) in combat and therefore the internal morality of warfare. In this type of ‘riskless warfare, […] the pursuit of asymmetry undermines reciprocity’ (Kahn 2002, 2). Following Kahn (2002, 4), the internal morality of warfare largely rests on ‘self-defence within conditions of reciprocal imposition of risk.’ Combatants are allowed to injure and kill each other ‘just as long as they stand in a relationship of mutual risk’ (Kahn 2002, 3). If the morality of the battlefield relies on these logics of self-defence, this is deeply challenged by various forms of technologically mediated asymmetrical warfare. It has been voiced as a significant concern in particular since NATO’s Kosovo campaign (Der Derian 2009) and has since grown more pronounced through the use of drones and, in particular, AI-driven weapons systems that decrease the influence of humans on the immediate decision-making of using force.

Second, AWS increase asymmetry by creating an emotional distance from the brutal reality of wars for those who are employing them. While the intense surveillance of targets and close-range experience of target engagement through live footage can create intimacy between operator and target, this experience is different from living through combat. At the same time, the practice of killing from a distance triggers a sense of deep injustice and helplessness among those populations affected by the increasingly autonomous use of force who are ‘living under drones’ (Cavallaro, Sonnenberg and Knuckey 2012). Scholars have convincingly argued that ‘the asymmetrical capacities of Western – and particularly US forces – themselves create the conditions for increasing use of terrorism’ (Kahn 2002, 6), thus ‘protracting the conflict rather than bringing it to a swifter and less bloody end’ (Sauer and Schörnig 2012, 373; see also Kilcullen and McDonald Exum 2009; Oudes and Zwijnenburg 2011).

This distancing from the brutal reality of war makes AWS appealing to casualty-averse, technologically advanced states such as the USA, but potentially alters the nature of warfare. This also connects well with other ‘risk transfer paths’ (Sauer and Schörnig 2012, 369) associated with practices of remote warfare that may be chosen to avert casualties, such as the use of private military security companies or working via airpower and local allies on the ground (Biegon and Watts 2017). Casualty aversion has been mostly associated with a democratic, largely Western, ‘post-heroic’ way of war depending on public opinion and the acceptance of using force (Scheipers and Greiner 2014; Kaempf 2018). But reports about the Russian aerial support campaign in Syria, for example, speak of similar tendencies of not seeking to put their own soldiers at risk (The Associated Press 2018). Mandel (2004) has analysed this casualty aversion trend in security strategy as the ‘quest for bloodless war’ but, at the same time, noted that war still and always includes the loss of lives, and that the availability of new and ever more advanced technologies should not cloud thinking about this stark reality.

Some states are acutely aware of this reality, as the ongoing debate on the issue of AWS at the UN Convention on Certain Conventional Weapons (UN-CCW) demonstrates. It is worth noting that most countries in favour of banning autonomous weapons are developing countries, which are typically less likely to attend international disarmament talks (Bode 2019). The fact that they are willing to speak out strongly against AWS makes their doing so even more significant. Their history of experiencing interventions and invasions from richer, more powerful countries (such as some of the ones in favour of AWS) also reminds us that they are most at risk from this technology.

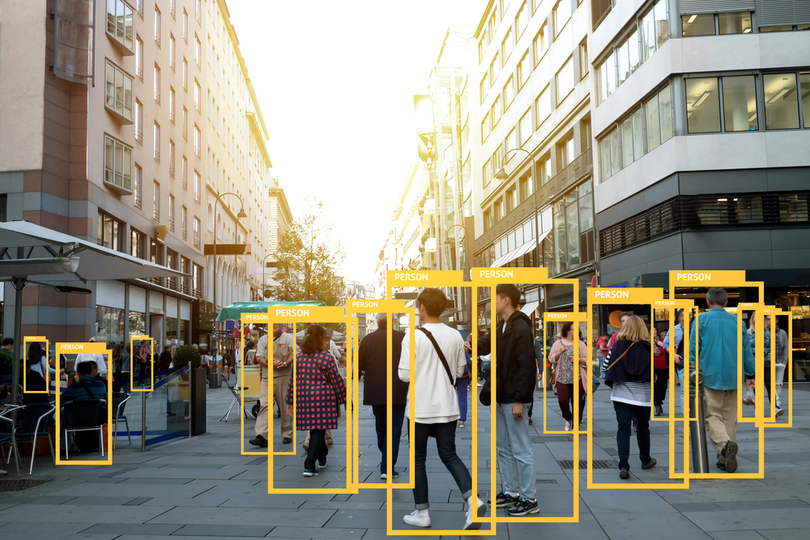

Third, AWS increase cognitive distance by compromising the human ability to ‘doubt algorithms’ (see Amoore 2019) in terms of data outputs at the heart of the targeting process. As humans using AI-driven systems encounter a lack of alternative information allowing them to substantively contest data output, it is increasingly difficult for human operators to doubt what ‘black box’ machines tell them. Their superior data processing capacity is exactly why target identification via pattern recognition in vast amounts of data is ‘delegated’ to AI-driven machines, using, for example, machine-learning algorithms at different stages of the targeting process and in surveillance more broadly.

But the more target acquisition and potential attacks are based on AI-driven systems as technology advances, the less we seem to know about how those decisions are made. To identify potential targets, countries such as the USA (e.g. SKYNET programme) already rely on metadata generated by machine-learning solutions focusing on pattern of life recognition (The Intercept 2015; see also Aradau and Blanke 2018). However, the lacking ability of humans to retrace how algorithms make decisions poses a serious ethical, legal and political problem. The inexplicability of algorithms makes it harder for any human operator, even if provided a ‘veto’ or the power to intervene ‘on the loop’ of the weapons system, to question metadata as the basis of targeting and engagement decisions. Notwithstanding these issues, as former Assistant Secretary for Homeland Security Policy Stewart Baker put it, ‘metadata absolutely tells you everything about somebody’s life. If you have enough metadata, you don’t really need content’, while General Michael Hayden, former director of the NSA and the CIA, emphasises that ‘[w]e kill people based on metadata’ (both quoted in Cole 2014).

The desire to find (quick) technological fixes or solutions for the ‘problem of war’ has long been at the heart of debates on AWS. We have increasingly seen this at the Group of Governmental Experts on Lethal Autonomous Weapons Systems (GGE) meetings at the UN-CCW in Geneva when countries already developing such weapons highlight their supposed benefits. Those in favour of AWS (including the USA, Australia and South Korea) have become more vocal than ever. The USA claimed that such weapons could actually make it easier to follow international humanitarian law by making military action more precise (United States 2018). But this is a purely speculative argument at present, especially in complex, fast-changing contexts such as urban warfare. Key principles of international humanitarian law require deliberate human judgements that machines are incapable of (Asaro 2018; Sharkey 2008). For example, the legal definition of who is a civilian and who is a combatant is not written in a way that could be easily programmed into AI, and machines lack the situational awareness and ability to infer things necessary to make this decision (Sharkey 2010).

Yet, some states seem to pretend that these intricate and complex issues are easily solvable through programming AI-driven weapons systems in just the right way. This feeds the technological ‘solutionism’ (Morozov 2014) narrative that does not appear to accept that some problems do not have technological solutions because they are inherently political in nature. So, quite apart from whether it is technologically possible, do we want, normatively, to take out deliberate human decision-making in this way?

This brings us to our second set of arguments, concerned with the fundamental questions that introducing AWS into practices of remote warfare poses to human-machine interaction.

The Problem of Meaningful Human Control

AI-driven systems signal the potential absence of immediate human decision-making on lethal force and the increasing loss of so-called meaningful human control (MHC). The concept of MHC has become a central focus of the ongoing transnational debate at the UN-CCW. The term was originally coined by the non-governmental organisation (NGO) Article 36 (Article 36 2013, 36; see Roff and Moyes 2016), and there are different understandings of what meaningful human control implies (Ekelhof 2019). It promises to resolve the difficulties encountered when attempting to define precisely what autonomy in weapons systems is, but also meets somewhat similar problems in its definition of key concepts. Roff and Moyes (2016, 2–3) suggest several factors that can enhance human control over technology: technology is supposed to be predictable, reliable and transparent; users should have accurate information; there is timely human action and a capacity for timely intervention, as well as human accountability. These factors underline the complex demands that could be important for maintaining MHC, but how they are linked and what degree of predictability or reliability, for example, is necessary to make human control meaningful remains unclear, and these elements are underdefined.

In this regard, many states consider the application of violent force without any human control as unacceptable and morally reprehensible. But there is less agreement about various complex forms of human-machine interaction and at what point(s) human control ceases to be meaningful. Should humans always be involved in authorising actions or is monitoring such actions with the option to veto and abort sufficient? Is meaningful human control realised by engineering weapons systems and AI in certain ways? Or, more fundamentally, is human control meaningful if it consists of simply executing decisions based on indications from a computer that are not accessible to human reasoning due to the ‘black-boxed’ nature of algorithmic processing? The noteworthy point about MHC as a norm in the context of AWS is also that it has long been compromised in different battlefield contexts. Complex human-machine interactions are not a recent phenomenon: even the extent to which human control in a fighter jet is meaningful is questionable (Ekelhof 2019).

However, the attempts to establish MHC as an emerging norm meant to regulate AWS are difficult. Indeed, over the past four years of debate in the UN-CCW, some states, supported by civil society organisations, have advocated introducing new legal norms to prohibit fully autonomous weapons systems, while other states leave the field open in order to increase their room for manoeuvre. As discussions drag on with little substantial progress, the operational trend towards developing AI-enabled weapons systems continues and is on track to becoming established as ‘the new normal’ in warfare (P. W. Singer 2010). For example, in its Unmanned Systems Integrated Roadmap 2013–2038, the US Department of Defense sets out a concrete plan to develop and deploy weapons with ever increasing autonomous features in the air, on land, and at sea in the next 20 years (US Department of Defense 2013).

While the US strategy on autonomy is the most advanced, a majority of the top ten arms exporters, including China and Russia, are developing or planning to develop some form of AI-driven weapon systems. Media reports have repeatedly pointed to the successful inclusion of machine learning techniques in weapons systems developed by Russian arms maker Kalashnikov, coming alongside President Putin’s much-publicised quote that ‘whoever leads in AI will rule the world’ (Busby 2018; Vincent 2017). China has reportedly made advances in developing autonomous ground vehicles (Lin and Singer 2014) and, in 2017, published an ambitiously worded government-led plan on AI with decisively increased financial expenditure (Metz 2018; Kania 2018).

The intention to regulate the practice of using force by setting norms stalls at the UN-CCW, but we highlight the importance of a reverse and likely scenario: practices shaping norms. These dynamics point to a potentially influential trajectory AWS may take towards changing what is appropriate when it comes to the use of force, thereby also transforming international norms governing the use of violent force.

We have already seen how the availability of drones has led to changes in how states consider using force. Here, access to drone technology appears to have made targeted killing seem an acceptable use of force for some states, thereby deviating significantly from previous understandings (Haas and Fischer 2017; Bode 2017; Warren and Bode 2014). In their usage of drone technology, states have therefore explicitly or implicitly pushed novel interpretations of key standards of international law governing the use of force, such as attribution and imminence. These practices cannot be captured with the traditional conceptual language of customary international law if they are not openly discussed or simply do not amount to its tight requirements, such as becoming ‘uniform and wide-spread’ in state practice or manifesting in a consistently stated belief in the applicability of a particular rule. But these practices are significant as they have arguably led to the emergence of a series of grey areas in shared understandings of international law governing the use of force (Bhuta et al. 2016). The resulting lack of clarity leads to a more permissive environment for using force: justifications for its use can more ‘easily’ be found within these increasingly elastic areas of international law.

We therefore argue that we can study how international norms regarding the use of AI-driven weapons systems emerge and change from the bottom up, via deliberative and non-deliberative practices. Deliberative practices as ways of doing things can be the outcome of reflection, consideration or negotiation. Non-deliberative practices, in contrast, refer to operational and typically non-verbalised practices undertaken in the process of developing, testing and deploying autonomous technologies.

We are currently witnessing, as described above, an effort to potentially make new norms regarding AI-driven weapons technologies at the UN-CCW via deliberative practices. But at the same time, non-deliberative and non-verbalised practices are constantly undertaken as well and simultaneously shape new understandings of appropriateness. These non-deliberative practices may stand in contrast to the deliberative practices centred on attempting to formulate a (consensus) norm of meaningful human control.

This does not only have repercussions for systems currently in different stages of development and testing, but also for systems with limited AI-driven capabilities that have been in use for the past two to three decades, such as cruise missiles and air defence systems. Most air defence systems already have significant autonomy in the targeting process and military aircraft have highly automatised features (Boulanin and Verbruggen 2017). Arguably, non-deliberative practices surrounding these systems have already created an understanding of what meaningful human control is. There is, then, already a norm, in the sense of an emerging understanding of appropriateness, emanating from these practices that has not been verbally enacted or reflected on. This makes it harder to deliberatively create a new meaningful human control norm.

Friendly fire incidents involving the US Patriot system can serve as an example here. In 2003, a Patriot battery stationed in Iraq downed a British Royal Air Force Tornado that had been mistakenly identified as an Iraqi anti-radiation missile. Notably, ‘[t]he Patriot system is nearly autonomous, with only the final launch decision requiring human interaction’ (Missile Defense Project 2018). The 2003 incident demonstrates the extent to which even a relatively simple weapons system (comprising elements such as radar and a number of automated functions meant to assist human operators) deeply compromises an understanding of MHC where a human operator has all required information to make an independent, informed decision that might contradict technologically generated data.

While humans were clearly ‘in the loop’ of the Patriot system, they lacked the required information to doubt the system’s information competently and were therefore misled: ‘[a]ccording to a summary of a report issued by a Pentagon advisory panel, Patriot missile systems used during battle in Iraq were given too much autonomy, which likely played a role in the accidental downings of friendly aircraft’ (Singer 2005). This example should be seen in the context of other, well-known incidents such as the 1988 downing of Iran Air flight 655 due to a fatal failure of the human-machine interaction of the Aegis system on board the USS Vincennes, or the crucial intervention of Stanislav Petrov, who rightly doubted information provided by the Soviet missile defence system reporting a nuclear weapons attack (Aksenov 2013). A 2016 incident in Nagorno-Karabakh provides another example of a system with autonomous anti-radar mode used in combat: Azerbaijan reportedly used an Israeli-made Harop ‘suicide drone’ to attack a bus of allegedly Armenian military volunteers, killing seven (Gibbons-Neff 2016). The Harop is a loitering munition able to launch autonomous attacks.

Overall, these examples point to the importance of targeting for considering autonomy in weapons systems. There are currently at least 154 weapons systems in use where the targeting process, comprising ‘identification, tracking, prioritisation and selection of targets to, in some cases, target engagement’ is supported by autonomous features (Boulanin and Verbruggen 2017, 23). The problem we emphasise here pertains not to the completion of the targeting cycle without any human intervention, but already emerges in the support functionality of autonomous features. Historical and more recent examples show that, here, human control is already often far from what we would consider as meaningful. It is noted, for example, that ‘[t]he S-400 Triumf, a Russian-made air defence system, can reportedly track more than 300 targets and engage with more than 36 targets simultaneously’ (Boulanin and Verbruggen 2017, 37). Is it possible for a human operator to meaningfully supervise the operation of such systems?

Yet, the apparent lack or compromised form of human control is seemingly considered acceptable: neither has the use of the Patriot system been questioned in relation to fatal incidents, nor is the S-400 contested for featuring an ‘unacceptable’ form of compromised human control. In this sense, the wider-spread usage of such air defence systems over decades has already led to new understandings of ‘acceptable’ MHC and human-machine interaction, triggering the emergence of new norms.

However, questions about the nature and quality of human control raised by these existing systems are not part of the ongoing discussion on AWS among states at the UN-CCW. In fact, states using automated weapons continue to actively exclude them from the debate by referring to them as ‘semi-autonomous’ or so-called ‘legacy systems.’ This omission prevents the international community from taking a closer look at whether practices of using these systems are fundamentally appropriate.

Conclusion

To conclude, we would like to come back to the key question inspiring our contribution: to what extent will AI-driven weapons systems shape and transform international norms governing the use of (violent) force?

In addressing this question, we should also remember who has agency in this process. Governments can (and should) decide how they want to guide this process rather than presenting a particular trajectory of the process as inevitable or framing technological progress of a certain kind as inevitable. This requires an explicit conversation about the values, ethics, principles and choices that should limit and guide the development, role and the prohibition of certain types of AI-driven security technologies in light of standards for appropriate human-machine interaction.

Technologies have always shaped and altered warfare and therefore how force is used and perceived (Ben-Yehuda 2013; Farrell 2005). Yet, the role that technology plays should not be conceived in deterministic terms. Rather, technology is ambivalent, making how it is used in international relations and in warfare a political question. We want to highlight here the ‘Collingridge dilemma of control’ (see Genus and Stirling 2018) that speaks of a common trade-off between knowing the impact of a given technology and the ease of influencing its social, political, and innovation trajectories. Collingridge (1980, 19) stated the following:

Attempting to control a technology is difficult […] because during its early stages, when it can be controlled, not enough can be known about its harmful social consequences to warrant controlling its development; but by the time these consequences are apparent, control has become costly and slow.

This aptly describes the situation that we find ourselves in regarding AI-driven weapon technologies. We are still at an initial, development stage of these technologies. Not many systems are in operation that have significant AI capacities. This makes it potentially harder to assess what the precise consequences of their use in remote warfare will be. The multi-billion investments made in various military applications of AI by, for example, the USA do suggest the increasing importance and crucial future role of AI. In this context, human control is decreasing and the next generation of drones at the core of remote warfare as the practice of distance combat will incorporate more autonomous features. If technological developments proceed at this pace and the international community fails to prohibit or even regulate autonomy in weapons systems, AWS are likely to play a major role in the remote warfare of the nearer future.

At the same time, we are still very much in the stage of technological development where guidance is possible, cheaper, easier, and less time-consuming, which is precisely why it is so important to have these wider, critical conversations about the consequences of AI for warfare now.

References

Aksenov, Pavel. 2013. ‘Stanislav Petrov: The Man Who May Have Saved the World.’ BBC Russian. September.

Amoore, Louise. 2019. ‘Doubtful Algorithms: Of Machine Learning Truths and Partial Accounts.’ Theory, Culture and Society, 36(6): 147–169.

Aradau, Claudia, and Tobias Blanke. 2018. ‘Governing Others: Anomaly and the Algorithmic Subject of Security.’ European Journal of International Security, 3(1): 1–21. https://doi.org/10.1017/eis.2017.14

Article 36. 2013. ‘Killer Robots: UK Government Policy on Fully Autonomous Weapons.’ http://www.article36.org/weapons-review/killer-robots-uk-government-policy-on-fully-autonomous-weapons-2/

Asaro, Peter. 2018. ‘Why the World Needs to Regulate Autonomous Weapons, and Soon.’ Bulletin of the Atomic Scientists (blog). 27 April. https://thebulletin.org/landing_article/why-the-world-needs-to-regulate-autonomous-weapons-and-soon/

Ben-Yehuda, Nachman. 2013. Atrocity, Deviance, and Submarine Warfare. Ann Arbor, MI: University of Michigan Press. https://doi.org/10.3998/mpub.5131732

Bhuta, Nehal, Susanne Beck, Robin Geiss, Hin-Yan Liu, and Claus Kress, eds. 2016. Autonomous Weapons Systems: Law, Ethics, Policy. Cambridge: Cambridge University Press.

Biegon, Rubrick, and Tom Watts. 2017. ‘Defining Remote Warfare: Security Cooperation.’ Oxford Research Group.

Biegon, Rubrick, and Tom Watts. 2019. ‘Conceptualising Remote Warfare: Security Cooperation.’ Oxford Research Group.

Bode, Ingvild. 2017. ‘“Manifestly Failing” and “Unable or Unwilling” as Intervention Formulas: A Critical Analysis.’ In Rethinking Humanitarian Intervention in the 21st Century, edited by Aiden Warren and Damian Grenfell. Edinburgh: Edinburgh University Press: 164–91.

Bode, Ingvild. 2019. ‘Norm-Making and the Global South: Attempts to Regulate Lethal Autonomous Weapons Systems.’ Global Policy, 10(3): 359–364.

Bode, Ingvild, and Hendrik Huelss. 2018. ‘Autonomous Weapons Systems and Changing Norms in International Relations.’ Review of International Studies, 44(3): 393–413.

Boulanin, Vincent, and Maaike Verbruggen. 2017. ‘Mapping the Development of Autonomy in Weapon Systems.’ Stockholm: Stockholm International Peace Research Institute. https://www.sipri.org/sites/default/files/2017-11/siprireport_mapping_the_development_of_autonomy_in_weapon_systems_1117_1.pdf

Busby, Mattha. 2018. ‘Killer Robots: Pressure Builds for Ban as Governments Meet.’ The Guardian, 9 April, sec. Technology. https://www.theguardian.com/technology/2018/apr/09/killer-robots-pressure-builds-for-ban-as-governments-meet

Casey-Maslen, Stuart. 2012. ‘Pandora’s Field? Drone Strikes beneath Jus Advert Bellum, Jus in Bello, and Worldwide Human Rights Regulation.’ Worldwide Assessment of the Purple Cross, 94(886): 597–625.

Cavallaro, James, Stephan Sonnenberg, and Sarah Knuckey. 2012. ‘Residing Beneath Drones: Dying, Harm and Trauma to Civilians from US Drone Practices in Pakistan.’ Worldwide Human Rights and Battle Decision Clinic, Stanford Regulation Faculty/NYU Faculty of Regulation, International Justice Clinic. https://law.stanford.edu/publications/living-under-drones-death-injury-and-trauma-to-civilians-from-us-drone-practices-in-pakistan/

Cole, David. 2014. ‘We Kill People Based on Metadata.’ The New York Review of Books (blog). 10 May. https://www.nybooks.com/daily/2014/05/10/we-kill-people-based-metadata/

Collingridge, David. 1980. The Social Control of Technology. London: Frances Pinter.

Der Derian, James. 2009. Virtuous War: Mapping the Military-Industrial-Media-Entertainment Network. 2nd ed. New York: Routledge.

Ekelhof, Merel. 2019. ‘Moving Beyond Semantics on Autonomous Weapons: Meaningful Human Control in Operation.’ Global Policy, 10(3): 343–348. https://doi.org/10.1111/1758-5899.12665

Farrell, Theo. 2005. The Norms of War: Cultural Beliefs and Modern Conflict. Boulder: Lynne Rienner Publishers.

Fleischman, William M. 2015. ‘Just Say “No!” To Lethal Autonomous Robotic Weapons.’ Journal of Information, Communication and Ethics in Society, 13(3/4): 299–313.

Genus, Audley, and Andy Stirling. 2018. ‘Collingridge and the Dilemma of Control: Towards Responsible and Accountable Innovation.’ Research Policy, 47(1): 61–69.

Gibbons-Neff, Thomas. 2016. ‘Israeli-Made Kamikaze Drone Spotted in Nagorno-Karabakh Conflict.’ The Washington Post. 5 April. https://www.washingtonpost.com/news/checkpoint/wp/2016/04/05/israeli-made-kamikaze-drone-spotted-in-nagorno-karabakh-conflict/?utm_term=.6acc4522477c

Gregory, Thomas. 2015. ‘Drones, Targeted Killings, and the Limitations of International Law.’ International Political Sociology, 9(3): 197–212.

Gusterson, Hugh. 2016. Drone: Remote Control Warfare. Cambridge, MA/London: MIT Press.

Haas, Michael Carl, and Sophie-Charlotte Fischer. 2017. ‘The Evolution of Targeted Killing Practices: Autonomous Weapons, Future Conflict, and the International Order.’ Contemporary Security Policy, 38(2): 281–306.

Hall, Abigail R., and Christopher J. Coyne. 2013. ‘The Political Economy of Drones.’ Defence and Peace Economics, 25(5): 445–60.

ICRC. 2016. ‘Views of the International Committee of the Red Cross (ICRC) on Autonomous Weapon Systems.’ https://www.icrc.org/en/document/views-icrc-autonomous-weapon-system

Kaempf, Sebastian. 2018. Saving Soldiers or Civilians? Casualty-Aversion versus Civilian Protection in Asymmetric Conflicts. Cambridge: Cambridge University Press.

Kahn, Paul W. 2002. ‘The Paradox of Riskless Warfare.’ Philosophy and Public Policy Quarterly, 22(3): 2–8.

Kania, Elsa. 2018. ‘China’s AI Agenda Advances.’ The Diplomat. 14 February. https://thediplomat.com/2018/02/chinas-ai-agenda-advances/

Kilcullen, David, and Andrew McDonald Exum. 2009. ‘Death From Above, Outrage Down Below.’ The New York Times. 17 May.

Lin, Jeffrey, and Peter W. Singer. 2014. ‘Chinese Autonomous Tanks: Driving Themselves to a Battlefield Near You?’ Popular Science. 7 October. https://www.popsci.com/blog-network/eastern-arsenal/chinese-autonomous-tanks-driving-themselves-battlefield-near-you

Mandel, Robert. 2004. Security, Strategy, and the Quest for Bloodless War. Boulder, CO: Lynne Rienner Publishers.

Metz, Cade. 2018. ‘As China Marches Forward on A.I., the White House Is Silent.’ The New York Times. 12 February. sec. Technology. https://www.nytimes.com/2018/02/12/technology/china-trump-artificial-intelligence.html

Missile Defense Project. 2018. ‘Patriot.’ Missile Threat. https://missilethreat.csis.org/system/patriot/

Morozov, Evgeny. 2014. To Save Everything, Click Here: Technology, Solutionism and the Urge to Fix Problems That Don’t Exist. London: Penguin Books.

Oudes, Cor, and Wim Zwijnenburg. 2011. ‘Does Unmanned Make Unacceptable? Exploring the Debate on Using Drones and Robots in Warfare.’ IKV Pax Christi.

Restrepo, Daniel. 2019. ‘Naked Soldiers, Naked Terrorists, and the Justifiability of Drone Warfare.’ Social Theory and Practice, 45(1): 103–26.

Roff, Heather M., and Richard Moyes. 2016. ‘Meaningful Human Control, Artificial Intelligence and Autonomous Weapons. Briefing Paper Prepared for the Informal Meeting of Experts on Lethal Autonomous Weapons Systems. UN Convention on Certain Conventional Weapons.’

Sauer, Frank, and Niklas Schörnig. 2012. ‘Killer Drones: The ‘Silver Bullet’ of Democratic Warfare?’ Security Dialogue, 43(4): 363–80.

Scheipers, Sibylle, and Bernd Greiner, eds. 2014. Heroism and the Changing Character of War: Toward Post-Heroic Warfare? Houndmills: Palgrave Macmillan.

Schwarz, Elke. 2016. ‘Prescription Drones: On the Techno-Biopolitical Regimes of Contemporary ‘Ethical Killing’.’ Security Dialogue, 47(1): 59–75.

Sharkey, Noel. 2008. ‘The Ethical Frontiers of Robotics.’ Science, 322(5909): 1800–1801.

Sharkey, Noel. 2010. ‘Saying ‘No!’ To Lethal Autonomous Targeting.’ Journal of Military Ethics, 9(4): 369–83.

Singer, Jeremy. 2005. ‘Report Cites Patriot Autonomy as a Factor in Friendly Fire Incidents.’ SpaceNews.Com. 14 March. https://spacenews.com/report-cites-patriot-autonomy-factor-friendly-fire-incidents/

Singer, Peter W. 2010. Wired for War. The Robotics Revolution and Conflict in the 21st Century. New York: Penguin.

Sparrow, Robert. 2007. ‘Killer Robots.’ Journal of Applied Philosophy, 24(1): 62–77.

Strawser, Bradley Jay. 2010. ‘Moral Predators: The Duty to Employ Uninhabited Aerial Vehicles.’ Journal of Military Ethics, 9(4): 342–68.

The Associated Press. 2018. ‘Tens of Thousands of Russian Troops Have Fought in Syria since 2015.’ Haaretz. 22 August. https://www.haaretz.com/middle-east-news/syria/tens-of-thousands-of-russian-troops-have-fought-in-syria-since-2015-1.6409649

The Intercept. 2015. ‘SKYNET: Courier Detection via Machine Learning – The Intercept.’ 2015. https://theintercept.com/document/2015/05/08/skynet-courier/

United States. 2018. ‘Human-Machine Interaction in the Development, Deployment and Use of Emerging Technologies in the Area of Lethal Autonomous Weapons Systems. UN Doc CCW/GGE.2/2018/WP.4.’ https://www.unog.ch/80256EDD006B8954/(httpAssets)/D1A2BA4B7B71D29FC12582F6004386EF/$file/2018_GGE+LAWS_August_Working+Paper_US.pdf

US Department of Defense. 2013. ‘Unmanned Systems Integrated Roadmap: FY2013-2038.’ https://info.publicintelligence.net/DoD-UnmannedRoadmap-2013.pdf

Vincent, James. 2017. ‘Putin Says the Nation That Leads in AI ‘Will Be the Ruler of the World.’’ The Verge. 4 September. https://www.theverge.com/2017/9/4/16251226/russia-ai-putin-rule-the-world

Walsh, James Igoe, and Marcus Schulzke. 2018. Drones and Support for the Use of Force. Ann Arbor: University of Michigan Press.

Warren, Aiden, and Ingvild Bode. 2014. Governing the Use-of-Force in International Relations. The Post-9/11 US Challenge on International Law. Basingstoke: Palgrave Macmillan.

———. 2015. ‘Altering the Playing Field: The US Redefinition of the Use-of-Force.’ Contemporary Security Policy, 36(2): 174–99. https://doi.org/10.1080/13523260.2015.1061768